Addicted to the Internet, don't you know that it's toxic?

Paraphrasing Britney Spears to consider new evidence that the Internet has always been a cold, dark, unfeeling place

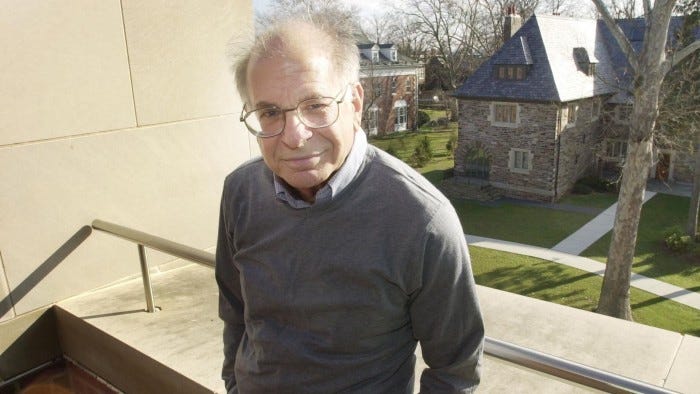

I read Daniel Kahneman’s Thinking, Fast and Slow ten years ago, and it made me feel a bit stupid. I found I could only read small sections at a time, as it was making my brain hurt. But, as you’d expect from a world-renowned, prize-winning book, sticking with it was so worth it—even if it meant adjusting my expectations on how I should read.

Even though it’s been ten years since I read it in full, plenty still sticks in my head. There’s the concept, whose name I can never remember, that describes the sensation of thinking about a particular subject, e.g. buying a red car, and then suddenly seeing red cars everywhere. It’s not that there are more red cars; it’s that you notice them because they’re on your mind. There’s also the concept of “What you see is all there is”: the idea that our own sphere of reference limits us humans.

Daniel Kahneman was on my mind this week, and not just because he recently passed away. Specifically, I’ve been thinking about heuristics: their prevalence and staying power.

Wikipedia defines heuristics, as they apply to psychology, as “simple, efficient rules…[they] explain how people make decisions, come to judgements, and solve problems”. Anchoring is a classic heuristic - “the tendency of people to make future judgements based too heavily on the original information applied to them”. For example, that video we commissioned last year cost £10k, so this new video will cost the same - even if the idea and level of production involved are vastly different.

The type on my mind this week was the representativeness heuristic. The representativeness heuristic is where we rely on preconceptions or received wisdom around a topic rather than the available evidence. It was on my mind after reading “Actually, the internet’s always been this bad”, a Substack write-up of new research completed in Italy.

The Italian researchers studied 30 years of internet comments, finding that “the toxicity level in online conversations has been relatively consistent over time, challenging the perception of a continual decline in the quality of discourse.”

The received wisdom goes that Gamergate, Brexit, and the Trump election drove massive negative engagement on Facebook and Twitter. Those companies’ algorithms then amplified that negativity, further entrenching people’s world views and creating combative “us vs. them” spaces online. I’m generalising, but you’ll be familiar with this kind of narrative.

This new study provides evidence to debunk that received wisdom. Looking at the high-level conversation graphs, you can see small, relatively recent spikes on Facebook and Twitter, but nothing statistically significant. In fact, the report backs up what the tech companies have been saying for years: toxic comments are only a tiny portion of the overall conversation, somewhere between 4% and 6% on average.

Interestingly, the study also found that the longer a thread or online conversation runs, the more toxic it tends to become.

But if this new study does indeed show that the “online toxicity is worse than ever” argument isn’t true, why does that feel so counter to our experience of having conversations online?

Scale certainly plays a part here—the sheer number of people now using platforms like Facebook and Twitter, vs. those using Usenet in the early days of the internet, means that 5% of posts is still quite a lot of posts.

As the report's authors point out, people conversing online in the 1990s were also extremely unlikely to have to deal with online swarms of negativity, coordinated bot attacks, and other forms of organised online activism designed to trigger algorithms and increase visibility. These concentrated bursts of activity tend to stick in the mind and fuel the perception that the internet is a toxic wasteland of antagonism and hatred.

The reality is, as ever, more complex. Online conversation may not be more toxic than ever, but that doesn’t give us a free pass to ignore online toxicity—particularly as it disproportionately affects women, ethnic minorities, and other minority groups.

The received wisdom that the internet is a dumpster fire is unhelpful, too: the current answers to reducing online toxicity tend to centre on increased regulation or finding a new platform that doesn’t carry the baggage of the past.

Neither of those two options appears very effective. Regulation inevitably becomes a game of whack-a-mole: find and report harmful content and force platforms to take it down. Even if a service like Threads or Bluesky managed to capture the collective imagination like Twitter did, there’s nothing in their design that means they’ll be any different or better. Based on the findings of the Data and Complexity for Society Lab, new platforms are doomed to repeat the same mistakes of the past.

As ever, with complex problems, there’s no easy solution. However, as Caitlin Dewey points out, this latest research highlights the need for more consistent, proactive moderation of online spaces to encourage more positive, constructive conversation. Ryan Broderick has beaten this drum for many years—regulation only goes so far.

Platforms need clear editorial policies around what is and isn’t acceptable, alongside the resources to implement those policies. That is obviously easier for specific, topic-based communities, like those you find on Reddit. It is also a challenge to implement at scale. But the easy options, the options that say, “Oh well, that’s humans for you” or “We believe in free speech”, simply don’t work. Better conversations stem from transparent moderation and clear guidelines on what can and can’t be posted.

That would be a dramatic, and unlikely, sea change in approach for most of the big tech conversation platforms. So until we see that kind of radical change, we all need to get comfortable with this constant, low-level, but too-loud-to-ignore hum of online toxicity.

And it also means that brands and businesses must prepare to handle those concentrated bursts of negativity designed to be too loud to ignore. The critical factor in this preparation is swift, effective triage. That means being able to quickly discern whether a loud burst of comments is simply noise we can safely filter out, or whether there is a need to act quickly and decisively because of the people involved, the subject matter it covers, or the level of media interest.

When going through that triage process and making those decisions, it’s important for businesses to remember some of those concepts from Thinking, Fast and Slow: being aware of heuristics that lack reliable evidence, not falling into the trap of “what you see is all there is,” and most importantly, not letting our instinctive, emotional “system one” rule over the more analytical “system two.”

Just because the CEO is upset and wants to see some action doesn’t mean that is the right course to take. A kneejerk reaction is likely to fan the flames and create an even more toxic cloud around the issue. That toxicity will likely take a long time to clear up, and it will make everyone feel a bit stupid.